I'm occasionally asked why C++ programmers conventionally use .cpp and .hpp files, what they use them for and what happens if they don't. On the spot I'll usually come out with the template answer that conventions exist for a reason, but I thought I might as well take a moment to explain more fully the reasoning behind this one.

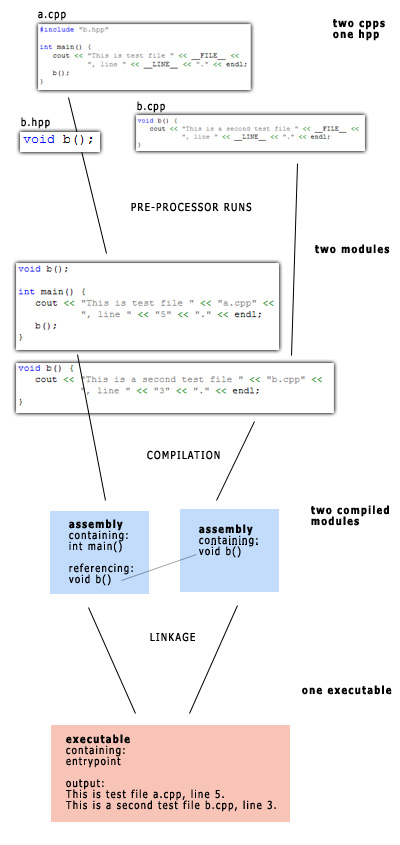

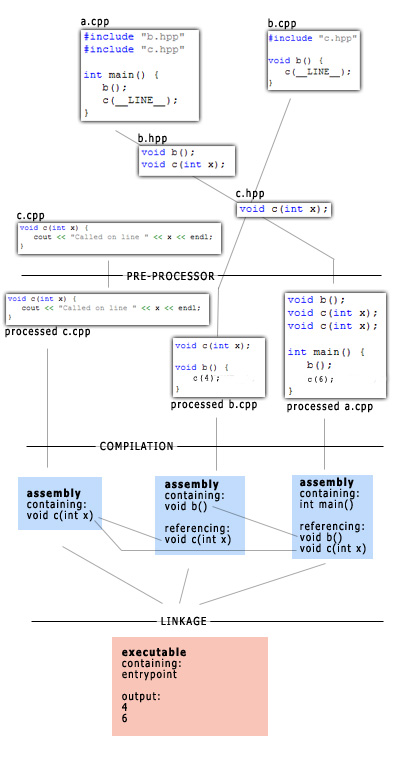

To understand the pitfalls of straying from convention, you first need to grasp how much goes into building a C++ project. When you turn your source code into an executable, you are not merely compiling it. Several steps are involved: each source file is preprocessed, then compiled into an object file, and finally all the object files are linked together. I've attempted to illustrate them below.

(Note: I haven't included iostream in the code above, because once preprocessed it would expand to many thousands of lines. You would still need to include it to compile successfully, since the code refers to cout. Also, it doesn't really matter what the files are called; they don't even have to have the .cpp and .hpp extensions, although editors, build tools and compilers use the extension to decide how to treat a file. g++, for instance, treats a file with an unrecognised extension as linker input unless you tell it the language with -x c++.)

Notice that a.cpp uses a function void b() that's defined in b.cpp, yet right up until linkage it knows nothing about that function's contents. The compiled a.cpp has no idea what the compiled b.cpp does.

Bridging that gap is the linker's job. It takes the object code of each compiled module and resolves the function calls between them, making sure that when a.cpp asks for void b(), that function is defined in one of the other modules. In this case it is: it's in the compiled b.cpp.

Function linkage

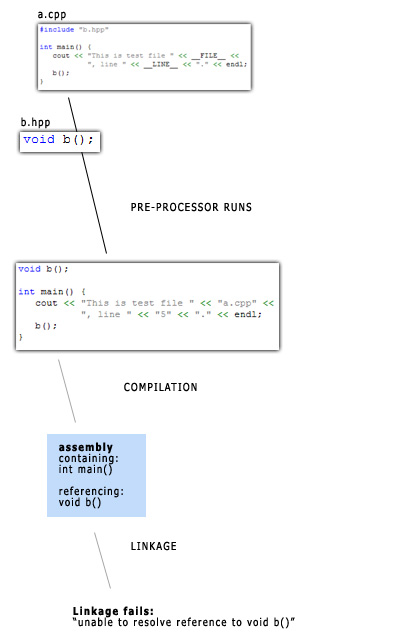

What would happen if b.cpp weren't included in the project? What if it weren't compiled and subsequently linked into the finished executable?

You get a linker error, telling you that the function definition couldn't be found and the executable couldn't be created.

So if void b() wasn't defined, why did compilation succeed?

Declaration vs Definition

The compilation stage succeeded because, until linkage, the compiler only needs to know that void b() exists somewhere, along with its return type and parameters. It doesn't need to know what the function does; it can leave the call unresolved and trust the linker to take care of the rest at the very end of the process.

This is why our preprocessed code has "void b();" at the top. This is called a declaration, and it says, "you may use this function; it's defined somewhere else". You can have as many declarations of the same function as you like ("you already know this, but you may use this function"), but only one definition.
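The declaration/definition split can be seen even within a single file. This is a minimal sketch rather than the code from the diagrams: to make the result easy to check, b() returns a string instead of printing with cout, and a() is a hypothetical caller standing in for the code in a.cpp.

```cpp
#include <string>

// Declaration: "you may use b(); it's defined somewhere else".
std::string b();

// Declaring the same function again is harmless.
std::string b();

// The compiler only needs the declaration above to accept this call.
// If b() were defined in another file (as in b.cpp), matching the call
// to its body would be the linker's job.
std::string a() {
    return "a calls " + b();
}

// The one and only definition. Writing a second body for b() would be an error.
std::string b() {
    return "b";
}
```

Calling a() returns "a calls b". Moving the definition of b() into a separate file changes nothing for a(): it still compiles against the declaration alone.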

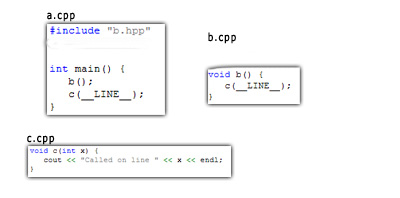

Why is this all so complicated? Consider this case:

Both a.cpp and b.cpp want to use the same function void c(int x), which is defined in c.cpp. We could simply have two versions of the same function, but that would mean updating both copies whenever we wanted to make a simple change to it. This is a trivial example, but as projects grow and code becomes more complex, it's extremely common to find that more than one module wants access to the same function.

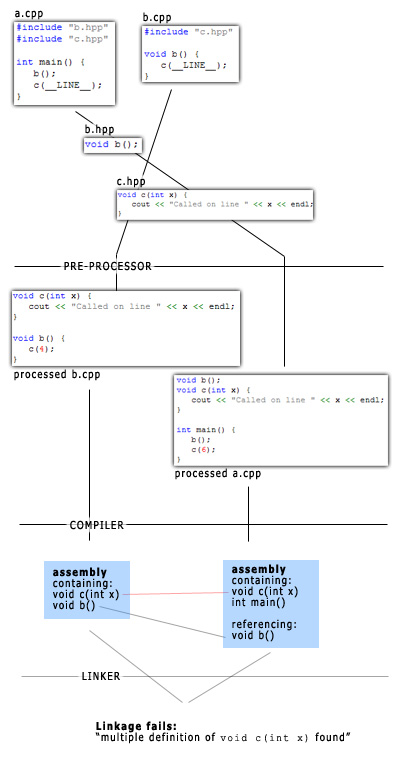

Defining this function in a header file and including that header in a.cpp and b.cpp would result in trouble:

When linking a call to void c(int x) with its definition, the linker finds several definitions to choose from, one in every module that included the header, and reports an error. It can't simply ignore all but one of them, because they might differ.

But because we are allowed to declare a function as often as we like, we can simply declare void c(int x) in a header file, include that header wherever we need it and let the linker locate the single definition in c.cpp.
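Here is a minimal sketch of that arrangement, written as one compilable listing so it can be checked. Each "// ----" section stands for a separate file. Since #include simply pastes a header's text into the including file, the a.cpp and b.cpp sections show the declaration from c.hpp as it would appear after preprocessing. The body of c() (which returns an int here instead of being void) and the callers useFromA() and useFromB() are hypothetical, for illustration only.

```cpp
// ---- c.hpp: contains only the declaration ----
//   int c(int x);

// ---- c.cpp: the one and only definition ----
int c(int x) {
    return x * 2;  // hypothetical body, for illustration only
}

// ---- a.cpp after preprocessing: #include "c.hpp" has pasted in the declaration ----
int c(int x);
int useFromA() {
    return c(1);
}

// ---- b.cpp after preprocessing: the same declaration, pasted in a second time ----
int c(int x);
int useFromB() {
    return c(20);
}
```

Concatenated like this it compiles, because repeated declarations are allowed. Had c.hpp contained the body instead, every file that included it would carry its own copy of the definition, which is exactly the clash the linker complains about.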

Conclusion

It's very difficult to explain fully why this convention is, in many cases, the best way out of dependency hell, but hopefully I've given an impression of how the C++ compilation and linkage process works, and demonstrated that "declare in headers, define in source files" is a very good rule of thumb to follow.

Bootnote

I can't be assed to fix this now as it's unrelated to the purpose of this post, but can anyone spot the bug in my last couple of examples?